In the first solution, I implemented an n-step semi-gradient TD(0) algorithm to determine the action at each state. I solved the problem 3 times using different techniques: However, the car’s engine is not strong enough to drive directly up the mountain, so it must drive back and forth up to build up momentum. In MountainCar, the agent tries to drive a car up the mountain to the right starting from the valley below. I coded an agent to solve the MountainCar problem from OpenAI Gym. To estimate the policy function, I trained a neural network in pytorch with linear layers and ReLUs to predict the best action based on the current state.

My agent uses the REINFORCE policy-gradient learning algorithm, with a baseline function. The agent gets a reward of +1 for every timestep that it keeps the pole upright. In CartPole, a pole is balanced atop a cart and the agent moves the cart to prevent the pole from falling. I coded an agent to solve the CartPole problem from OpenAI Gym. I also wrote an in-depth review of what I learned from the textbook, and posted my detailed notes on my bookshelf. Below are two selected projects from the course. In our Reinforcement Learning class, we read the textbook Reinforcement Learning by Sutton and Barto and implemented several RL algorithms to solve problems from the book. This allows our agents to detect the puck location and drive behind it to dribble the puck into the opponent’s goal.Īssignment details. This strategy is built around the idea that one agent with perfect information would perform better than two agents with imperfect information.Įach agent processes its camera input using a fully convolutional network that we designed similar to the U-Net architecture with residual connections from ResNet. Our general strategy was to have one agent focus on scoring the puck into the goal, and leave the other agent to focus on tracking the puck location as accurately as possible and communicating this information to the first agent. The instructors ran a tournament between every team’s agents and we finished in 3rd place among 16 teams. In a team with three other classmates, we built two autonomous hockey-playing agents in the SuperTuxKart game to score goals against opposing teams from our class. I coded a controller to handle the steering, acceleration, braking, and drifting of the kart based on this predicted aim point.Īssignment details. 
The model is built in pytorch and is a fully convolutional network using an encoder-decoder structure with batch normalization to process the image and predict an “aim point”, which is an xy-coordinate of where the kart should drive. I built an agent which uses computer vision to drive a go-kart autonomously in the SuperTuxKart game using only the screen image as input it has no other knowledge about the game. In our Deep Learning class, we built deep neural networks in pytorch to develop vision systems for the racing simulator SuperTuxKart, which is an open-source clone of Mario Kart. Below is a selection of projects from my coursework. In my Master’s of Computer Science at the University of Texas at Austin, I completed coursework focused on deep learning, computer vision, reinforcement learning, parallelization, linear algebra, and optimization. ▶ Plenty of Game modes: The game also features additional game modes besides normal races : time trials, follow-the-leader, soccer, capture-the-flag and two types of battle mode.Machine Learning projects at the University of Texas at Austin From the beaches of sunny islands to the depth of an old mine, from the streets of Candela City to peaceful countryside roads, from a spaceship to the mountains, you have much to explore and discover. ▶ Plenty of tracks and biomes: Its 21 tracks will take you in varied environments. It features Grand Prix where the goal is to get the most points over several races. ▶ Single and Multiplayer: online multiplayer mode, a local multiplayer mode, and a single player mode against AIs with both custom races and a story mode to complete to unlock new karts and tracks. SuperTuxKart offers a huge amount of content including over 20 tracks, over 15 characters and 7 game modes. 
“Beat the evil Nolok by any means necessary, and make the mascot kingdom safe once again” as you try to win the game alone, or race against up to 8 friends on one PC in shared screen mode or against anyone around the world with online or LAN network multiplayer.

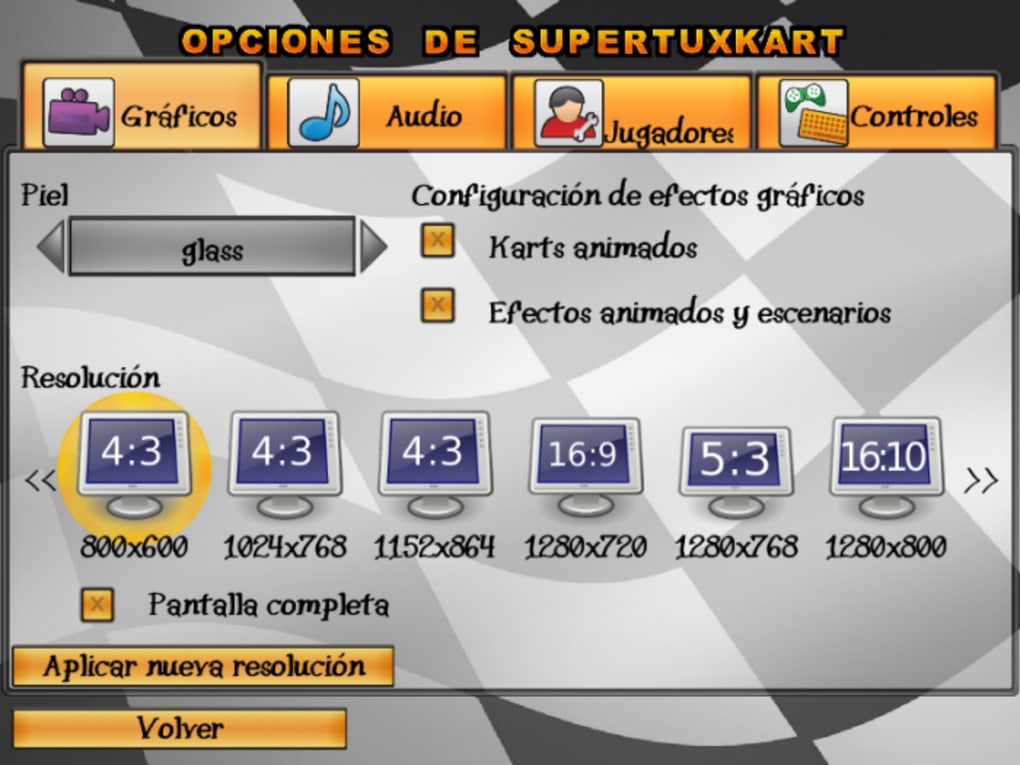

SuperTuxKart is a free, open source single & multiplayer 3D Mario kart-like racing game kindly developed by the SuperTuxKart Team for Windows, Linux, Mac OSX, Android and iOS operating systems.
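
For the MountainCar agent, an n-step semi-gradient method rests on two pieces: the n-step return and a gradient step on a parametric value estimate. This is a minimal sketch of that update in its action-value form, the episodic semi-gradient n-step Sarsa described by Sutton and Barto. It assumes linear function approximation with one weight vector per action over a feature vector x(s). The function names and the feature vector are hypothetical; this is not the original project’s code.

```python
from typing import List, Sequence


def n_step_return(rewards: Sequence[float], bootstrap_value: float, gamma: float) -> float:
    """n-step return G_{t:t+n}.

    rewards holds R_{t+1}..R_{t+n}; bootstrap_value is q_hat(S_{t+n}, A_{t+n}),
    or 0.0 if the episode ended within those n steps.
    """
    g = 0.0
    for k, r in enumerate(rewards):
        g += (gamma ** k) * r
    return g + (gamma ** len(rewards)) * bootstrap_value


def q_hat(weights: Sequence[float], features: Sequence[float]) -> float:
    """Linear action-value estimate for one action: w_a . x(s)."""
    return sum(w * x for w, x in zip(weights, features))


def greedy_action(weights_per_action: Sequence[Sequence[float]],
                  features: Sequence[float]) -> int:
    """Pick the action with the highest estimated value in the current state."""
    values = [q_hat(w, features) for w in weights_per_action]
    return max(range(len(values)), key=values.__getitem__)


def semi_gradient_update(weights: Sequence[float], features: Sequence[float],
                         target: float, alpha: float) -> List[float]:
    """w_a <- w_a + alpha * (G - q_hat(s, a)) * x(s).

    For a linear q_hat the gradient with respect to w_a is just x(s); it is a
    "semi"-gradient because the bootstrapped target G is treated as a constant.
    """
    error = target - q_hat(weights, features)
    return [w + alpha * error * x for w, x in zip(weights, features)]


if __name__ == "__main__":
    # Three steps of -1 reward, then bootstrap from a value estimate of -10.
    g = n_step_return([-1.0, -1.0, -1.0], bootstrap_value=-10.0, gamma=1.0)
    print(g)  # -13.0
    print(semi_gradient_update([0.0, 0.0, 0.0], [1.0, 0.0, 1.0], g, alpha=0.25))
    # [-3.25, 0.0, -3.25]
```

In a full agent, the features would typically come from tile coding of MountainCar’s position and velocity, and the rewards would be Gym’s -1 per timestep. Both are assumptions here, not details taken from the project.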
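
The CartPole agent’s REINFORCE-with-baseline update can be sketched without PyTorch. To keep the example self-contained, it swaps the original neural network for a linear softmax policy and a linear state-value baseline; both are hypothetical simplifications. The update order follows Sutton and Barto’s REINFORCE with baseline:

1. Compute the return G_t.
2. Subtract the baseline to get δ.
3. Step both the baseline weights and the policy parameters using that δ.

```python
import math
from typing import List, Sequence, Tuple

# One step of an episode: (feature vector x(S_t), action A_t, reward R_{t+1}).
Step = Tuple[Sequence[float], int, float]


def discounted_returns(rewards: Sequence[float], gamma: float) -> List[float]:
    """G_t = R_{t+1} + gamma * G_{t+1}, computed backwards over one episode."""
    returns = [0.0] * len(rewards)
    g = 0.0
    for t in reversed(range(len(rewards))):
        g = rewards[t] + gamma * g
        returns[t] = g
    return returns


def softmax_policy(theta: Sequence[Sequence[float]], x: Sequence[float]) -> List[float]:
    """pi(a|s) for a linear softmax policy with one weight vector per action."""
    prefs = [sum(w * xi for w, xi in zip(theta_a, x)) for theta_a in theta]
    m = max(prefs)  # subtract the max preference for numerical stability
    exps = [math.exp(p - m) for p in prefs]
    z = sum(exps)
    return [e / z for e in exps]


def reinforce_with_baseline_update(theta, baseline_w, episode: Sequence[Step],
                                   gamma: float, alpha_theta: float, alpha_w: float):
    """Apply the Monte Carlo updates for one finished episode."""
    returns = discounted_returns([r for _, _, r in episode], gamma)
    for t, ((x, a, _), g) in enumerate(zip(episode, returns)):
        # Baseline b(S_t) is a linear state-value estimate v_hat(S_t, w).
        v = sum(w * xi for w, xi in zip(baseline_w, x))
        delta = g - v
        # Move the baseline toward the observed return.
        baseline_w = [w + alpha_w * delta * xi for w, xi in zip(baseline_w, x)]
        probs = softmax_policy(theta, x)
        # For a linear softmax, d ln pi(a|s) / d theta_b = (1[a == b] - pi(b|s)) * x.
        theta = [
            [w + alpha_theta * (gamma ** t) * delta
             * ((1.0 if b == a else 0.0) - probs[b]) * xi
             for w, xi in zip(theta_b, x)]
            for b, theta_b in enumerate(theta)
        ]
    return theta, baseline_w


if __name__ == "__main__":
    # Toy one-step episode: action 1 earned reward 1.0 in a state with features [1, 0].
    theta, w = reinforce_with_baseline_update(
        [[0.0, 0.0], [0.0, 0.0]], [0.0, 0.0], [([1.0, 0.0], 1, 1.0)],
        gamma=1.0, alpha_theta=0.5, alpha_w=0.5)
    print(softmax_policy(theta, [1.0, 0.0]))  # action 1 now has probability above 0.5
```

Subtracting a baseline that does not depend on the action leaves the expected policy gradient unchanged but reduces its variance. This usually speeds up learning on tasks like CartPole.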
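
For the driving agent, the controller turns the predicted aim point into steering, acceleration, braking, and drifting commands. This is a minimal rule-based sketch under stated assumptions. The aim point’s x-coordinate is taken to be normalized to [-1, 1]. The KartAction class, gains, and thresholds are hypothetical placeholders, not the values or API used in the original project.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class KartAction:
    """Hypothetical control command; fields mirror the controls named in the text."""
    steer: float = 0.0         # -1 (full left) .. 1 (full right)
    acceleration: float = 0.0  # 0 .. 1
    brake: bool = False
    drift: bool = False


def clamp(value: float, low: float, high: float) -> float:
    return max(low, min(high, value))


def control(aim_point: Tuple[float, float], current_velocity: float,
            target_velocity: float = 20.0, steer_gain: float = 2.0,
            drift_threshold: float = 0.5, brake_threshold: float = 0.8) -> KartAction:
    """Map a predicted aim point to a control command.

    aim_point is (x, y) with x in [-1, 1] (negative = left of center).
    All gains and thresholds are hypothetical defaults, not tuned values.
    """
    x, _ = aim_point
    action = KartAction()
    # Proportional steering toward the aim point, saturated to the valid range.
    action.steer = clamp(steer_gain * x, -1.0, 1.0)
    # Drift through sharp turns (aim point far from the center).
    action.drift = abs(x) > drift_threshold
    # Brake only on very sharp turns while moving fast.
    action.brake = abs(x) > brake_threshold and current_velocity > 0.5 * target_velocity
    # Otherwise accelerate until the target speed is reached.
    too_fast = current_velocity > target_velocity
    action.acceleration = 0.0 if (action.brake or too_fast) else 1.0
    return action


if __name__ == "__main__":
    print(control((0.0, 0.0), current_velocity=5.0))    # straight ahead: full throttle
    print(control((0.9, 0.1), current_velocity=15.0))   # sharp right: steer, drift, brake
```

Proportional steering keeps the kart pointed at the aim point, and the drift and brake rules only kick in on sharp turns. In practice, constants like these would be tuned on the tracks.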
